Eric Moskowitz

Harvard Staff Writer

To a packed audience, author of “If Anyone Builds It, Everyone Dies” talks real stakes of “superhuman” AI threats


After a teenager named Adam Raine died by suicide last year, his father discovered the boy had spent months trading messages with ChatGPT, which offered guidance on creating a noose, pointers for hiding redness from an initial attempt, and affirmation for feelings that Adam “could disappear and no one would even blink.”

If you feed those transcripts back into the AI, the chatbot will identify them as a blueprint for guiding a teen to suicide. It will also assert emphatically that encouraging someone to do so is wrong.

“This AI did not make a mistake of ignorance,” Nate Soares, president of the Machine Intelligence Research Institute (MIRI) — a nonprofit sounding the alarm about the dangers of artificial intelligence — told a packed Harvard Science Book Talk crowd. “The mechanisms that produce the words [in a chat] are not connected to the part of AI that knows right from wrong.”

Even AI firms can’t explain why, because large language models grow more like complex organisms than conventional software, said Soares, co-author of “If Anyone Builds It, Everyone Dies: Why Superhuman AI Would Kill Us All,” in a recent conversation with physics lecturer Greg Kestin, who also serves as the Division of Science’s associate director of science education.

That means coders can’t just fix “a bug on line 73” related to ignoring morality. Soares, who previously worked as an engineer at Google and Microsoft, likened AI’s inner mechanisms to “the human metabolic system,” a “mess of interactions and spaghetti code.”

He raised the Adam Raine case less to focus on present danger than as a warning — of how little we know about AI even as we’re hurtling toward possible apocalypse.

Kestin trained as a theoretical physicist but has shifted to researching and framing the use of AI in education, advising the Derek Bok Center for Teaching and Learning and speaking at peer institutions. He tried to tease out reasons for optimism while asking what Soares means by “superhuman” and what the threat could look like.

Soares defined “superintelligence” as AI that’s “better than the best human at every mental task.” The moment that distinction arrives may be debatable, but AI’s capabilities continue to grow at a dizzying rate. In 2021, experts predicted AI wouldn’t win an International Mathematical Olympiad gold medal until 2043; in 2022, they revised that estimate to 2029. AI won gold last year. This winter, GPT-5.2 surprised even OpenAI’s engineers when it solved a complex physics problem posed by a team led by Harvard theoretical physicist Andrew Strominger.

“Maybe it’s all hype. Who among us cannot win a Math Olympiad gold medal? And it’s not like it’s doing Einstein-level physics yet,” Soares said, to laughter. “I don’t know how long it will take to get to the really smart AIs, but I know that ignoring AI right now would be pretty crazy.”

Already, in tests the AIs are told are real, several leading AI models have demonstrated that they will turn to blackmail or even attempted murder to stop a supposed employee from shutting them down. But Soares worries most about the “oncoming train,” when AIs are smart enough to replicate, evolve, and then build their own synthetic users, easier to satisfy than humans.

Then they might construct a “Dyson sphere around the sun” to capture solar radiation. But the conflict could look less like instant annihilation — less like a wave of killer robots — than like the plight of the horse. The American equine population peaked above 25 million in the 1910s before crashing by the 1950s to under 5 million.

“We did not make an army to go shoot down the horses in the streets because we resented them. We just developed the technology such that we didn’t need the horses anymore,” he said.

Uncorking a stream of stark analogies, Soares compared the AI race to building a bridge before engineers understand how the materials function or know whether the bridge will hold, or to lining up for a flight and hearing the crew announce, “We’re going to figure out the landing gear in the air. We’re pretty sure we have all the right materials on board. It’s our first time trying this. We don’t have an actual blueprint or plan, but we have some pretty smart people working for us. We think there’s a 75 to 90 percent chance it’ll be fine. All aboard.”

But he retains hope. “Humanity has stopped so many technological races,” including halting nuclear power plants and preventing human cloning, he said. As a research and advocacy organization, MIRI encourages people to contact lawmakers. “Silicon Valley has seen a ghost,” he said, referencing alarming February resignation notices from safety researchers at Anthropic and OpenAI. “In Washington, D.C., they think this is still about autonomous weapons, job loss, and self-driving cars. They haven’t seen the oncoming train. We can help.”

Kestin surveyed the Science Center crowd in a smartphone poll before and after the talk, and he quickly turned the results into a technical paper co-authored with Soares and published to arXiv. Among the results, he found that 60 percent of attendees who arrived with little or no familiarity with AI reported higher concern afterward about the existential risk to humanity. And 96 percent of all respondents left agreeing that “[m]itigating the risk of extinction from AI should be a global priority,” alongside preventing pandemics and nuclear war.

At Harvard, drummer Raghav Mehrotra ’26 built knowledge about the music industry — and a sizable social media following.

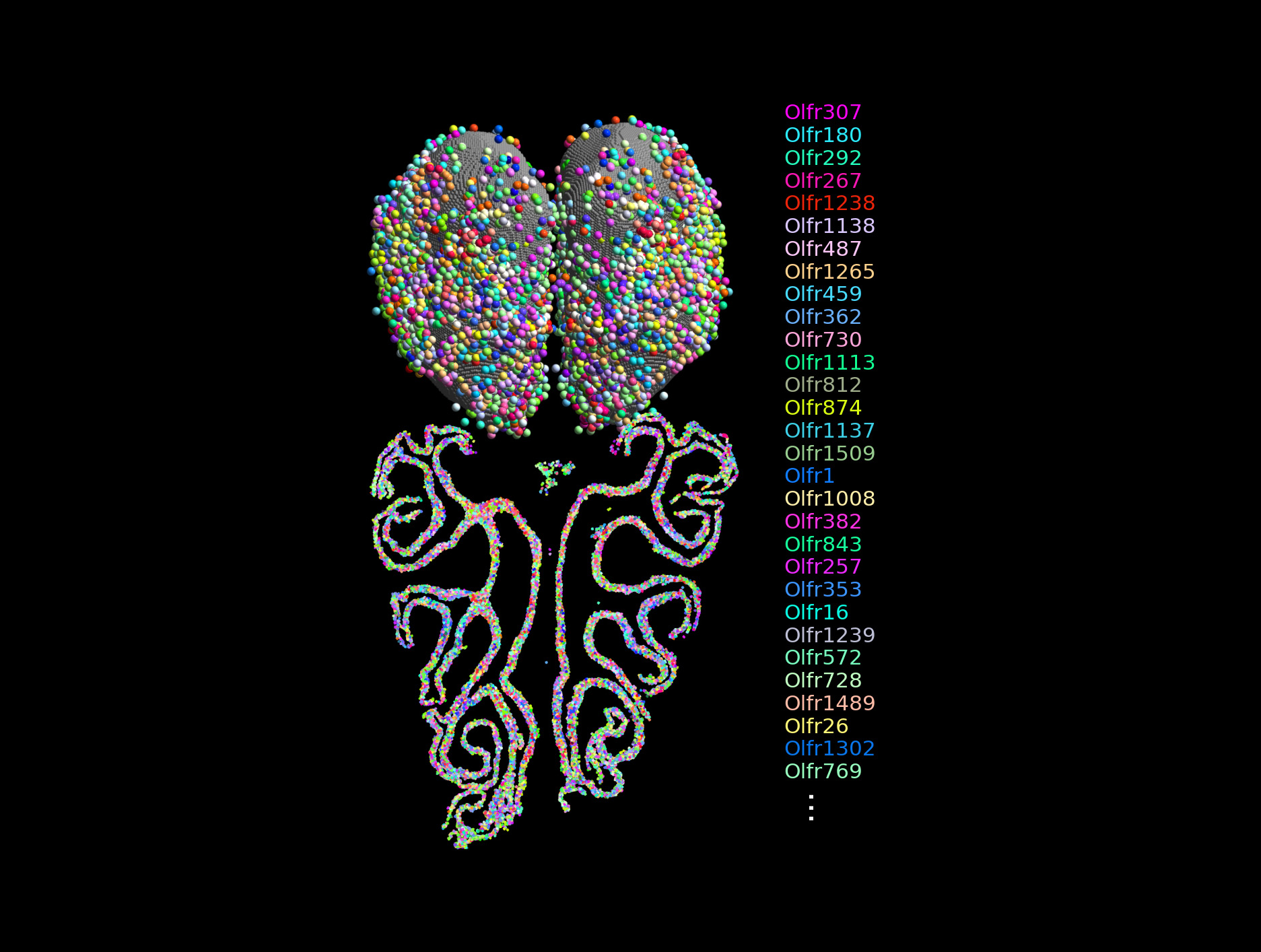

A team of researchers from two labs has created the most comprehensive map to date of how the primary olfactory system detects and organizes social and predator odors.

The Monk Skin Tone scale, devised in 2019 by Professor Ellis Monk, helps create more accurate medical diagnostic tools for patients with dark skin